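Log system: ELFK

Preface

After last week's technical research, a meeting this Monday decided, based on the company's current business traffic and tech stack, that the logging solution would be the combination elasticsearch (es) + logstash (lo) + filebeat (fi) + kibana (ki). For es we chose the es provided by Aliyun; lo and fi we deploy ourselves; ki came free with Alibaba Cloud. Because requesting ECS instances takes some time, for now we deploy into the test & production environment (a small complaint: our test and production environments share a single k8s cluster, and it is hosted on Aliyun...). Deploying ELFK on Kubernetes took one day (I had already done roughly the same deployment before). The goal was to get it running first; optimization can come later as needed.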

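Component overview

es is a real-time, distributed, scalable search engine that supports full-text and structured search. It is commonly used to index and search large volumes of log data, and can also search many other kinds of documents.

The main advantage of lo is its flexibility, mostly thanks to its many plugins. Detailed documentation and a straightforward configuration format let it be used in many scenarios, and plenty of resources can be found online, so almost any problem can be solved.

As a member of the Beats family, fi is a lightweight log shipper, and it makes up for exactly the weakness of lo (lo runs on the JVM and is comparatively heavy): being lightweight, fi can collect logs where they are produced and push them to a central lo.

ki is an analytics and visualization platform. It can browse and visualize the log data stored in the es cluster and build dashboards. ki integrates closely with es, is simple to operate, and exposes most of the es API, which makes it a purpose-built application for displaying logs.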

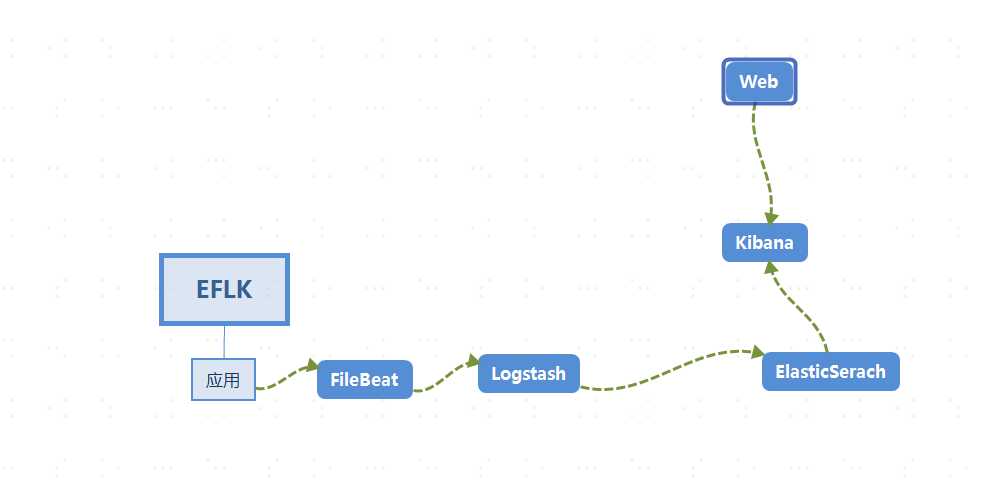

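Data collection flow diagram

Log flow: logs_data -> fi -> lo -> es -> ki.

fi collects logs_data and outputs it to lo; lo filters and modifies the events and then sends them to the es database; ki reads from es for analysis.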
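To make the direction of the data flow concrete, here is a toy Python model of the pipeline with each component reduced to one function. It is purely illustrative: the function names and the "drop blank lines, add a tag" rule are hypothetical stand-ins, not what the real fi, lo or es do internally.

```python
from typing import Dict, Iterable, Iterator, List

Event = Dict[str, str]


def filebeat(lines: Iterable[str]) -> Iterator[Event]:
    """fi (toy): ship each raw log line as an event."""
    for line in lines:
        yield {"message": line}


def logstash(events: Iterable[Event]) -> Iterator[Event]:
    """lo (toy): filter and modify; here, drop blank lines and tag the rest (hypothetical rule)."""
    for event in events:
        if event["message"].strip():
            yield {**event, "tag": "k8s"}


def elasticsearch(events: Iterable[Event]) -> List[Event]:
    """es (toy): store the events; ki would then query this store."""
    return list(events)
```

Chaining `elasticsearch(logstash(filebeat(lines)))` mirrors logs_data -> fi -> lo -> es; ki sits at the end, reading what es stored.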

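Deployment

This document reproduces the logging system deployment exactly as it was done on our actual cluster.

Install kubectl on a local Mac to connect to the Aliyun-hosted k8s

On the client (any local VM will do), install kubectl at the same version as the hosted k8s. The commands below fetch the Linux binary; on macOS itself, use darwin/amd64 instead of linux/amd64 in the URL.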

curl -LO https://storage.googleapis.com/kubernetes-release/release/v1.14.8/bin/linux/amd64/kubectl

chmod +x ./kubectl

mv ./kubectl /usr/local/bin/kubectl

Save the kubeconfig of the Aliyun-hosted k8s as the file $HOME/.kube/config, and mind the file's ownership and permissions.
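The kubeconfig holds credentials for the whole cluster, so it is worth checking who can read it. A minimal Python sketch, assuming a POSIX system and the rule of thumb that the file should belong to the current user with mode 600. `permission_problems` and `check_kubeconfig` are hypothetical helper names.

```python
import os
import stat
from typing import List


def permission_problems(mode: int, owner_uid: int, current_uid: int) -> List[str]:
    """Return the problems found for a kubeconfig with the given st_mode and owner uid."""
    problems = []
    if owner_uid != current_uid:
        problems.append(f"owned by uid {owner_uid}, not by the current user ({current_uid})")
    # Any read/write/execute bit for group or others is too permissive for credentials.
    if mode & (stat.S_IRWXG | stat.S_IRWXO):
        problems.append(f"mode {stat.S_IMODE(mode):o} is open to group/others; run chmod 600")
    return problems


def check_kubeconfig(path: str = os.path.expanduser("~/.kube/config")) -> List[str]:
    """Stat the real file (POSIX only, since os.getuid does not exist on Windows)."""
    st = os.stat(path)
    return permission_problems(st.st_mode, st.st_uid, os.getuid())
```

An empty list from `check_kubeconfig()` means the file is private to the current user.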

Deploy ELFK

Create a namespace (usually one namespace per project).

# cat kube-logging.yaml
apiVersion: v1
kind: Namespace
metadata:
  name: loging
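Namespace names must be valid RFC 1123 DNS labels: lowercase alphanumerics or '-', starting and ending with an alphanumeric, at most 63 characters. A small Python sketch of that rule. `is_valid_namespace` is a hypothetical helper, and the API server remains the real authority.

```python
import re

# RFC 1123 label: lowercase alphanumerics or '-', must start and end with an alphanumeric.
_DNS1123_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def is_valid_namespace(name: str) -> bool:
    """True if name satisfies the DNS-1123 label rule Kubernetes applies to namespaces."""
    return len(name) <= 63 and _DNS1123_LABEL.fullmatch(name) is not None
```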

Deploy es. Find a roughly suitable manifest online, adapt it to your needs, apply it, and when it fails, fix it based on the logs.

# cat elasticsearch.yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: local-class
  namespace: loging   # StorageClass is cluster-scoped; this field has no effect
provisioner: kubernetes.io/no-provisioner
volumeBindingMode: WaitForFirstConsumer
# Supported policies: Delete, Retain
reclaimPolicy: Delete
---
kind: PersistentVolume
apiVersion: v1
metadata:
  name: datadir1
  namespace: loging   # PersistentVolume is cluster-scoped; this field has no effect
  labels:
    type: local
spec:
  storageClassName: local-class
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteOnce
  hostPath:
    path: "/data/data1"
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: elasticsearch
  namespace: loging
spec:
  serviceName: elasticsearch
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      containers:
      - name: elasticsearch
        image: elasticsearch:7.3.1
        resources:
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        ports:
        - containerPort: 9200
          name: rest
          protocol: TCP
        - containerPort: 9300
          name: inter-node
          protocol: TCP
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
        env:
          - name: "discovery.type"
            value: "single-node"
          - name: cluster.name
            value: k8s-logs
          - name: node.name
            valueFrom:
              fieldRef:
                fieldPath: metadata.name
          - name: ES_JAVA_OPTS
            value: "-Xms512m -Xmx512m"
      initContainers:
      - name: fix-permissions
        image: busybox
        command: ["sh", "-c", "chown -R 1000:1000 /usr/share/elasticsearch/data"]
        securityContext:
          privileged: true
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
      - name: increase-vm-max-map
        image: busybox
        command: ["sysctl", "-w", "vm.max_map_count=262144"]
        securityContext:
          privileged: true
      - name: increase-fd-ulimit
        image: busybox
        # ulimit only applies to this init container's own shell, not to the es container
        command: ["sh", "-c", "ulimit -n 65536"]
        securityContext:
          privileged: true
  volumeClaimTemplates:
  - metadata:
      name: data
      labels:
        app: elasticsearch
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: "local-class"
      resources:
        requests:
          storage: 5Gi
---
kind: Service
apiVersion: v1
metadata:
  name: elasticsearch
  namespace: loging
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  clusterIP: None
  ports:
    - port: 9200
      name: rest
    - port: 9300
      name: inter-node
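Every manifest in this deployment has to agree on the namespace name, and a one-letter slip such as `logging` vs `loging` is easy to make. Here is a rough Python sketch that lists every `namespace:` value in a manifest, together with the line numbers where it appears. It assumes plain `namespace: value` lines and is a line-based regex scan, not a real YAML parser. `namespaces_in` is a hypothetical helper.

```python
import re
from collections import defaultdict
from typing import Dict, Set

# Matches "namespace: <value>" lines; a trailing "# comment" is not captured.
_NS_LINE = re.compile(r"^\s*namespace:\s*([A-Za-z0-9.-]+)")


def namespaces_in(manifest_text: str) -> Dict[str, Set[int]]:
    """Map each namespace value found in the manifest text to the 1-based lines using it."""
    found: Dict[str, Set[int]] = defaultdict(set)
    for lineno, line in enumerate(manifest_text.splitlines(), start=1):
        match = _NS_LINE.match(line)
        if match:
            found[match.group(1)].add(lineno)
    return dict(found)
```

Run it over the concatenated manifests. More than one key in the result points at an inconsistency worth checking.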

Deploy ki. According to the data collection flow diagram, ki only needs to talk to es, so its configuration is relatively simple.

# cat kibana.yaml
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: kibana
  namespace: loging
  labels:
    k8s-app: kibana
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: kibana
  template:
    metadata:
      labels:
        k8s-app: kibana
    spec:
      containers:
      - name: kibana
        image: kibana:7.3.1
        resources:
          limits:
            cpu: 1
            memory: 500Mi
          requests:
            cpu: 0.5
            memory: 200Mi
        env:
          - name: ELASTICSEARCH_HOSTS
            # Note: the value is the es Service name. es is stateful and sits behind a headless
            # Service, so this name resolves to the es pods rather than naming a single pod.
            value: http://elasticsearch:9200
        ports:
        - containerPort: 5601
          name: ui
          protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: loging
spec:
  ports:
  - port: 5601
    protocol: TCP
    targetPort: ui
  selector:
    k8s-app: kibana
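The Kibana comment above relies on Kubernetes DNS. A headless Service name resolves to the IPs of its ready pods, and each StatefulSet pod also gets a stable name of the form `<statefulset>-<ordinal>.<service>.<namespace>.svc.<cluster domain>`. Below is a sketch of both naming rules in Python. It assumes the default cluster domain `cluster.local`, which the hosted cluster may configure differently. The function names are hypothetical.

```python
def service_fqdn(service: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """DNS name of a Service; for a headless Service it resolves to the ready pod IPs."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def statefulset_pod_fqdn(statefulset: str, ordinal: int, service: str,
                         namespace: str, cluster_domain: str = "cluster.local") -> str:
    """Stable per-pod DNS name: <statefulset>-<ordinal>.<governing Service domain>."""
    return f"{statefulset}-{ordinal}.{service_fqdn(service, namespace, cluster_domain)}"
```

Kibana runs in the same namespace, so the short name `elasticsearch` is enough. From another namespace, at least `elasticsearch.loging` would be needed.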

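Configure the Ingress. We use the nginx-ingress controller bundled with the Aliyun-hosted k8s, and it is configured to force redirects to HTTPS, so the kibana Ingress must also be configured for HTTPS. First create a self-signed certificate and a TLS secret: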

# openssl genrsa -out tls.key 2048

# openssl req -new -x509 -key tls.key -out tls.crt -subj /C=CN/ST=Beijing/L=Beijing/O=DevOps/CN=kibana.test.realibox.com

# kubectl create secret tls kibana-ingress-secret --cert=tls.crt --key=tls.key -n loging

The kibana Ingress configuration follows, in two variants: one for HTTPS and one for HTTP.

https:

# cat kibana-ingress.yaml
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: kibana
  namespace: loging
spec:
  tls:
  - hosts:
    - kibana.test.realibox.com
    secretName: kibana-ingress-secret
  rules:
  - host: kibana.test.realibox.com
    http:
      paths:
      - path: /
        backend:
          serviceName: kibana
          servicePort: 5601

http:

# cat kibana-ingress.yaml
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: kibana
  namespace: loging
spec:
  rules:
  - host: kibana.test.realibox.com
    http:
      paths:
      - path: /
        backend:
          serviceName: kibana
          servicePort: 5601
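Both variants route the same way. A request whose Host header is kibana.test.realibox.com and whose path starts with `/` goes to port 5601 of the kibana Service. Here is a simplified Python model of that host-plus-path-prefix lookup. `Rule` and `route` are hypothetical names, and nginx-ingress's real matching covers more cases (regex paths, default backends, TLS redirects).

```python
from typing import List, NamedTuple, Optional, Tuple


class Rule(NamedTuple):
    host: str
    path: str
    service: str
    port: int


def route(rules: List[Rule], host: str, path: str) -> Optional[Tuple[str, int]]:
    """Pick the rule for this host whose path prefix is longest (simplified model)."""
    best: Optional[Rule] = None
    for rule in rules:
        if rule.host != host:
            continue
        prefix = rule.path.rstrip("/")
        # "/" matches everything; other prefixes match whole path segments only.
        if prefix == "" or path == prefix or path.startswith(prefix + "/"):
            if best is None or len(rule.path) > len(best.path):
                best = rule
    return (best.service, best.port) if best is not None else None
```

For example, `route([Rule("kibana.test.realibox.com", "/", "kibana", 5601)], "kibana.test.realibox.com", "/app/kibana")` selects `("kibana", 5601)`, while any other host gets no backend.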

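Deploy lo. lo filters the logs collected by fi, and different logs need different processing, so its configuration will probably change often and should be decoupled from the deployment. That is why it is mounted from a ConfigMap.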

# cat logstash.yaml
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: logstash
  namespace: loging
spec:
  replicas: 1
  selector:
    matchLabels:
      app: logstash
  template:
    metadata:
      labels:
        app: logstash
    spec:
      containers:
      - name: logstash
        image: elastic/logstash:7.3.1
        volumeMounts:
        - name: config
          mountPath: /opt/logstash/config/containers.conf
          subPath: containers.conf
        command:
        - "/bin/sh"
        - "-c"
        - "/opt/logstash/bin/logstash -f /opt/logstash/config/containers.conf"
      volumes:
      - name: config
        configMap:
          name: logstash-k8s-config
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: logstash
  name: logstash
  namespace: loging
spec:
  ports:
  - port: 8080
    targetPort: 8080
  selector:
    app: logstash
  type: ClusterIP

# cat logstash-config.yaml
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: logstash
  name: logstash
  namespace: loging
spec:
  ports:
  - port: 8080
    targetPort: 8080
  selector:
    app: logstash
  type: ClusterIP
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: logstash-k8s-config
  namespace: loging
data:
  containers.conf: |
    input {
      beats {
        port => 8080 # port that filebeat connects to
      }
    }
    output {
      elasticsearch {
        hosts => ["elasticsearch:9200"] # the es Service
        index => "logstash-%{+YYYY.MM.dd}"
      }
    }
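The `index => "logstash-%{+YYYY.MM.dd}"` setting creates one index per day. The date is taken from the event's @timestamp in UTC, not in local time. For a cluster in UTC+8, logs written between midnight and 08:00 local time therefore land in the previous day's index. Here is a Python sketch of that naming rule. `daily_index` and `CST` are names introduced only for illustration.

```python
from datetime import datetime, timedelta, timezone

CST = timezone(timedelta(hours=8))  # UTC+8, used in the example below


def daily_index(event_time: datetime, prefix: str = "logstash") -> str:
    """Daily index name in the style of "logstash-%{+YYYY.MM.dd}", dated in UTC."""
    if event_time.tzinfo is None:
        raise ValueError("event_time must be timezone-aware")
    return f"{prefix}-{event_time.astimezone(timezone.utc):%Y.%m.%d}"
```

For example, an event at 07:00 on 2019-11-05 in UTC+8 is 23:00 on 2019-11-04 in UTC, so it goes to `logstash-2019.11.04`.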

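Note: editing the ConfigMap plays a role similar to changing the image: it does not take effect by itself. Re-apply the manifest after editing. Because containers.conf is mounted via subPath, which never receives ConfigMap updates, the Logstash pod must also be recreated, for example by deleting it and letting the Deployment start a new one. Per the data collection flow diagram, lo receives data from fi and sends it to es.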

Deploy fi. fi's main job is to collect logs and hand the data to lo.
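The `docker` input configured below reads the files that Docker's json-file logging driver writes under /var/lib/docker/containers, one `<container-id>-json.log` per container. Every line in those files is a JSON object with `log`, `stream` and `time` fields. The sketch assumes the nodes use that driver, as the hostPath mount of /var/lib/docker/containers implies, and shows how one line decodes in Python. `parse_docker_json_line` is a hypothetical helper, not Filebeat code.

```python
import json
from typing import Dict


def parse_docker_json_line(line: str) -> Dict[str, str]:
    """Decode one line of a Docker json-file log into message, stream and time."""
    record = json.loads(line)
    return {
        "message": record["log"].rstrip("\n"),  # Docker keeps the trailing newline in "log"
        "stream": record["stream"],              # "stdout" or "stderr"
        "time": record["time"],                  # timestamp written by Docker (UTC)
    }
```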

# cat filebeat.yaml
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
  namespace: loging
  labels:
    app: filebeat
data:
  filebeat.yml: |-
    filebeat.config:
      inputs:
        # Mounted `filebeat-inputs` configmap:
        path: ${path.config}/inputs.d/*.yml
        # Reload inputs configs as they change:
        reload.enabled: false
      modules:
        path: ${path.config}/modules.d/*.yml
        # Reload module configs as they change:
        reload.enabled: false

    # To enable hints based autodiscover, remove `filebeat.config.inputs` configuration and uncomment this:
    #filebeat.autodiscover:
    #  providers:
    #    - type: kubernetes
    #      hints.enabled: true

    output.logstash:
      hosts: ['${LOGSTASH_HOST:logstash}:${LOGSTASH_PORT:8080}'] # send to lo
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-inputs
  namespace: loging
  labels:
    app: filebeat
data:
  kubernetes.yml: |-
    - type: docker
      containers.ids:
      - "*"
      processors:
        - add_kubernetes_metadata:
            in_cluster: true
---
apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: filebeat
  namespace: loging
  labels:
    app: filebeat
spec:
  selector:
    matchLabels:
      app: filebeat
  template:
    metadata:
      labels:
        app: filebeat
    spec:
      serviceAccountName: filebeat
      terminationGracePeriodSeconds: 30
      containers:
      - name: filebeat
        image: elastic/filebeat:7.3.1
        args: [
          "-c", "/etc/filebeat.yml",
          "-e",
        ]
        env: # injected variables
        - name: LOGSTASH_HOST
          value: logstash
        - name: LOGSTASH_PORT
          value: "8080"
        securityContext:
          runAsUser: 0
          # If using Red Hat OpenShift uncomment this:
          #privileged: true
        resources:
          limits:
            memory: 200Mi
          requests:
            cpu: 100m
            memory: 100Mi
        volumeMounts:
        - name: config
          mountPath: /etc/filebeat.yml
          readOnly: true
          subPath: filebeat.yml
        - name: inputs
          mountPath: /usr/share/filebeat/inputs.d
          readOnly: true
        - name: data
          mountPath: /usr/share/filebeat/data
        - name: varlibdockercontainers
          mountPath: /var/lib/docker/containers
          readOnly: true
      volumes:
      - name: config
        configMap:
          defaultMode: 0600
          name: filebeat-config
      - name: varlibdockercontainers
        hostPath:
          path: /var/lib/docker/containers
      - name: inputs
        configMap:
          defaultMode: 0600
          name: filebeat-inputs
      # data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart
      - name: data
        hostPath:
          path: /var/lib/filebeat-data
          type: DirectoryOrCreate
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
- kind: ServiceAccount
  name: filebeat
  namespace: loging
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: filebeat
  labels:
    app: filebeat
rules:
- apiGroups: [""] # "" indicates the core API group
  resources:
  - namespaces
  - pods
  verbs:
  - get
  - watch
  - list
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
  namespace: loging
  labels:
    app: filebeat
---
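`${LOGSTASH_HOST:logstash}` uses the Beats config syntax `${VAR:default}`. The value comes from the environment variable, which the DaemonSet's `env` section injects here, and falls back to the default after the colon. Here is a simplified Python model of that substitution. `expand` is a hypothetical name, and the real Beats resolver handles more cases, such as references to other settings like `${path.config}`.

```python
import re
from typing import Mapping

# ${NAME} or ${NAME:default}; NAME may not contain ':' or '}'.
_VAR = re.compile(r"\$\{([^:}]+)(?::([^}]*))?\}")


def expand(template: str, env: Mapping[str, str]) -> str:
    """Replace each ${NAME:default} with env[NAME], else the default, else raise KeyError."""
    def substitute(match: "re.Match[str]") -> str:
        name, default = match.group(1), match.group(2)
        if name in env:
            return env[name]
        if default is not None:
            return default
        raise KeyError(f"missing variable {name} and no default")

    return _VAR.sub(substitute, template)
```

With the DaemonSet's values (`LOGSTASH_HOST=logstash`, `LOGSTASH_PORT=8080`) or with no variables set at all, the output host resolves to `logstash:8080`, which matches the logstash Service port.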

That completes the deployment of es+lo+fi+ki on k8s; next, a quick verification.

Verification

Check the svc, pod, and ingress information:

# kubectl get svc,pods,ingress -n loging
NAME                    TYPE        CLUSTER-IP        EXTERNAL-IP   PORT(S)             AGE
service/elasticsearch   ClusterIP   None              <none>        9200/TCP,9300/TCP   151m
service/kibana          ClusterIP   xxx.168.239.2xx   <none>        5601/TCP            20h
service/logstash        ClusterIP   xxx.168.38.1xx    <none>        8080/TCP            122m

NAME                            READY   STATUS    RESTARTS   AGE
pod/elasticsearch-0             1/1     Running   0          151m
pod/filebeat-24zl7              1/1     Running   0          118m
pod/filebeat-4w7b6              1/1     Running   0          118m
pod/filebeat-m5kv4              1/1     Running   0          118m
pod/filebeat-t6x4t              1/1     Running   0          118m
pod/kibana-689f4bd647-7jrqd     1/1     Running   0          20h
pod/logstash-76bc9b5f95-qtngp   1/1     Running   0          122m

NAME                        HOSTS                      ADDRESS         PORTS     AGE
ingress.extensions/kibana   kibana.test.realibox.com   xxx.xx.xx.xxx   80, 443   19h
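Running pods do not yet prove that es is healthy. Its `_cluster/health` endpoint reports a `status` of green, yellow or red. On this single-node setup, any index with replica shards stays yellow, because a replica is never placed on the same node as its primary (Elasticsearch 7 defaults to one replica). Here is a Python sketch that interprets the response. The in-cluster URL is an assumption and only resolves from a pod inside the cluster; from a workstation, a `kubectl port-forward` to localhost would need a different URL.

```python
import json
import urllib.request

# Hypothetical in-cluster URL: the es headless Service in the loging namespace.
ES_HEALTH_URL = "http://elasticsearch.loging:9200/_cluster/health"


def health_ok(payload: str, allow_yellow: bool = True) -> bool:
    """Interpret a _cluster/health response body; yellow is accepted for a single node."""
    status = json.loads(payload)["status"]
    return status == "green" or (allow_yellow and status == "yellow")


def fetch_health(url: str = ES_HEALTH_URL, timeout: float = 5.0) -> str:
    """Fetch the raw health JSON (works only where the Service name resolves)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

Inside the cluster, `health_ok(fetch_health())` gives a quick pass/fail. Pass `allow_yellow=False` once the cluster has more than one data node.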

Web configuration

Configure the index pattern

Discover

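With that, a basic setup is done. It will need ongoing optimization, but that is a matter for later.

Lessons learned

This was probably my first time personally deploying an application in the test & production environment, and it was a logging system I was quite unfamiliar with, so I ran into many problems worth summarizing: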

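- How to research a technology stack;
- How to choose a solution;
- Almost no comparable solution could be found online (I don't know how other companies do it; at least nothing usable turned up), so I had to piece it together from various documents and experiment;
- Keep the labels within one component as consistent as possible;
- How to check whether the company has port restrictions and forced HTTPS redirection in place;
- For anything IT-related, always read the logs. This matters a lot: logs solve the vast majority of problems;
- One person working alone will always overlook something; try it yourself first, then ask friends for advice and improve together;
- Get the project online first and worry about the rest later. For now my rule is: with 20% certainty you can go ahead; waiting until you are 80% sure takes the point out of it;
- Self-study mostly teaches theory; hands-on practice is learned at work.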

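Site statement: the content of this site comes from cnblogs (博客園). In case of infringement, please contact us and we will handle it promptly.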
